SkyScan: Transformer-Powered Aerial Object Detection

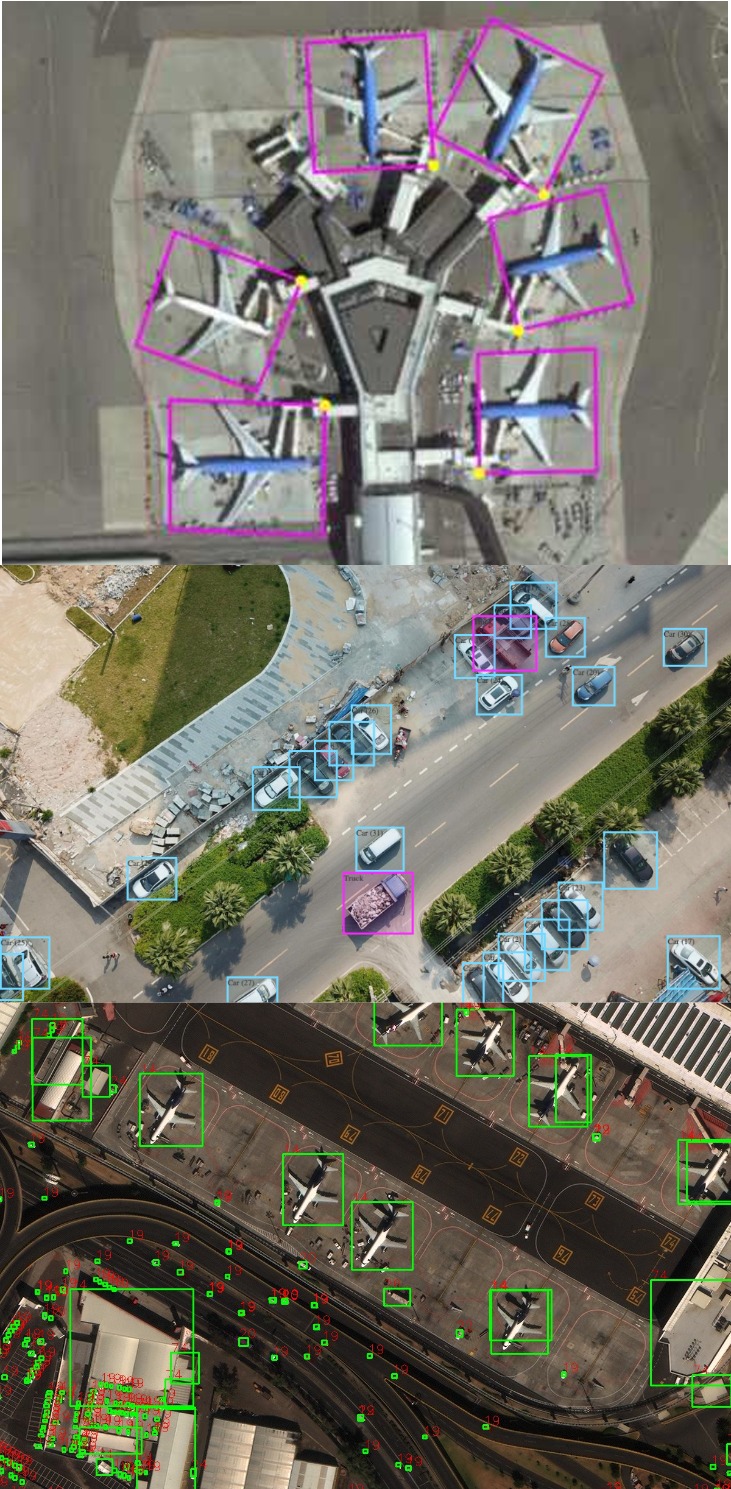

SkyScan is a fast aerial-object detector for drones and satellites. It aims to keep the speed of a YOLO-style detector while improving how well we pick up tiny, crowded, and rotated targets by adding lightweight transformer-based heads. In practice, we handle large aerial photos by splitting them into overlapping tiles, running detection on each tile, and then merging the results back together with non-maximum suppression so duplicates are removed.

The detector is designed for practical use: simple to train, easy to evaluate, and realistic about hardware limits. We will use standard datasets—DOTA (oriented boxes and polygon IoU), VisDrone (crowded street scenes with COCO mAP@[0.50:0.95]), and xView (satellite with many small classes)—and always report the dataset, metric, input size, and hardware so accuracy (AP) and speed (FPS) are fairly compared. SkyScan should shine in busy scenes like parking lots, intersections, or harbors; tough cases like low light or heavy blur can be helped with tiling, modest super-resolution, or a simple tracker.

Image source: Freepik — Top-down aerial parking lot

Datasets & Evaluation Metrics

DOTA (oriented boxes)

DOTA uses oriented bounding boxes for ships, planes, and bridges. Images are huge, so typical pipelines tile → infer → merge. The official evaluation measures polygon IoU.

VisDrone (drone imagery)

VisDrone is crowded with tiny objects (pedestrians, cars, bikes). Main score: COCO-style mAP@[0.50:0.95] which rewards precise localization.

xView (satellite)

xView covers satellite scenes with many classes. Challenge reports PASCAL-style mAP. Objects can be extremely small; tiling or super-resolution helps.

- Key metrics: IoU measures overlap; AP50 is forgiving; AP@[.50:.95] is stricter; mAP is the class mean. Always state which one.

Image sources: DOTA · VisDrone · xView

Architecture & Key Ideas

we keep a YOLO-style backbone for speed and an FPN-type neck to fuse multi-scale features. On top, a small transformer head (2–3 lightweight layers with deformable/pointed attention) gives each location a little context from nearby regions. This boosts recall on tiny, crowded, or rotated objects without making the model heavy.

- Backbone: compact CNN (YOLO-sized) for real-time features

- Neck: FPN/PAFPN to combine 3–4 scales

- Head: transformer → YOLO-style conv heads (class, objectness, box)

- Boxes: axis-aligned by default; optional rotated

(cx, cy, w, h, θ)for DOTA/xView - Inference on big images: overlapping tiles → merge detections → (oriented) NMS

Why this design? YOLO gives the speed budget for drones; a tiny transformer head adds just enough context to separate packed objects and recover small ones. Tiling makes huge aerial frames practical without a huge GPU.

Performance & Scalability (Accuracy vs Speed)

How we test (simple): same dataset, same image size/tiles, and same hardware for all models. We report AP (accuracy) and FPS/latency (speed).

- YOLO: fastest baseline.

- SkyScan: almost as fast, usually better on tiny/crowded or rotated objects.

- Deformable DETR: more context, usually slower.

Trade-offs: bigger input → more AP but less FPS; smaller/INT8 models → more FPS but a little less AP.

Challenges & Limitations

- Data & labels: Oriented boxes are harder to annotate; datasets can be imbalanced; domain shift (rural vs urban) hurts generalization.

- Tiny & crowded: Objects may be just a few pixels; occlusion/blur common. Use tiling, smart crops, or super-resolution.

- Real-time on edge: Limited compute/power on drones. Prefer light backbones, quantization, careful input sizes.

Vision–Language Models (VLMs) & What’s Next

Open-Vocabulary Detection (OVD) can find classes never seen in training using text prompts (e.g., “lifeboat”, “solar panel”). Models like Grounding DINO, OWL-ViT, and GLIP bring text–image alignment. For aerial scenes, research adapts these ideas to tiny, oriented objects. Promising for rare targets and new regions.

- Consider sensor fusion (RGB + thermal/LiDAR) for night/fog.

- Distill big VLMs into lighter detectors for drones; cache text embeddings on device.

Quick Quiz

Three questions to check understanding. Click Grade to see your score instantly.

Annotated Bibliography (in simple words)

References are listed in a practical order (datasets → classical/YOLO → transformers → tiling/NMS → VLM/OVD). Each entry has a short synopsis and a reliability note.

- Xia, G., et al. (2018). DOTA: A Large-scale Dataset for Object Detection in Aerial Images. CVPR.

Link: PDF · ↩ back

Synopsis: Standard aerial benchmark with oriented boxes; very large images (tiling needed).

Reliability: Top-tier conference (High). - Lam, D., et al. (2018). xView: Objects in Context in Overhead Imagery.

Link: PDF · ↩ back

Synopsis: Satellite dataset with many classes and tiny objects; PASCAL-style mAP often used.

Reliability: Widely used public dataset (High). - Bochkovskiy, A., Wang, C.-Y., Liao, H.-Y.M. (2020). YOLOv4: Optimal Speed and Accuracy of Object Detection.

Link: PDF · ↩ back

Synopsis: Strong one-stage baseline; tricks like Mosaic/CIoU helpful for small objects.

Reliability: Popular arXiv paper; heavily reproduced (High). - Wang, C.-Y., et al. (2022). YOLOv7: Trainable bag-of-freebies sets new state-of-the-art.

Link: PDF · ↩ back

Synopsis: Efficient detector variants; useful real-time baselines for drones.

Reliability: Widely cited community paper (High). - Carion, N., et al. (2020). End-to-End Object Detection with Transformers (DETR). ECCV.

Link: PDF · ↩ back

Synopsis: Set prediction with transformers; simple pipeline, more context, slower to train.

Reliability: Top-tier conference (High). - Zhu, X., et al. (2021). Deformable DETR: Deformable Transformers for End-to-End Object Detection. ICLR.

Link: PDF · ↩ back

Synopsis: Sparse multi-scale attention; trains faster; better for small objects than vanilla DETR.

Reliability: Top-tier conference (High). - Yang, X., et al. (2021). R3Det: Refined Single-Stage Detector with Feature Refinement for Rotated Object Detection.

Link: PDF · ↩ back

Synopsis: Rotated bounding-box detection tailored for aerial imagery (e.g., DOTA).

Reliability: Well-cited arXiv work (High). - Han, J., et al. (2020). S2A-Net: Scale-aware Attention Networks for Rotated Object Detection. CVPR.

Link: PDF · ↩ back

Synopsis: Improves oriented detection with scale-aware attention heads.

Reliability: Top-tier conference (High). - Xie, X., et al. (2021). Oriented R-CNN for Object Detection. ICCV.

Link: PDF · ↩ back

Synopsis: Two-stage oriented detector; strong baseline on DOTA-style tasks.

Reliability: Top-tier conference (High). - Solovyev, R., et al. (2019). Weighted Boxes Fusion for Object Detection Ensembles.

Link: PDF · ↩ back

Synopsis: Averages boxes by confidence instead of suppressing; useful when merging tile outputs.

Reliability: Widely adopted arXiv paper (High). - Li, J., et al. (2022). GLIP: Grounded Language-Image Pre-training for Object Detection. CVPR.

Link: PDF · ↩ back

Synopsis: Vision-language pretraining that enables open-vocabulary detection via prompts.

Reliability: Top-tier conference (High). - Minderer, M., et al. (2022). Simple Open-Vocabulary Object Detection with Vision Transformers (OWL-ViT). ECCV.

Link: PDF · ↩ back

Synopsis: Text-promptable detector using ViTs; baseline for OVD.

Reliability: Top-tier conference (High). - Liu, S., et al. (2024). Grounding DINO: Marrying DINO with Grounded Pre-Training for Open-Set Detection. ECCV.

Link: PDF · ↩ back

Synopsis: Strong open-vocabulary detector; widely used for prompt-based spotting.

Reliability: Top-tier conference (High).